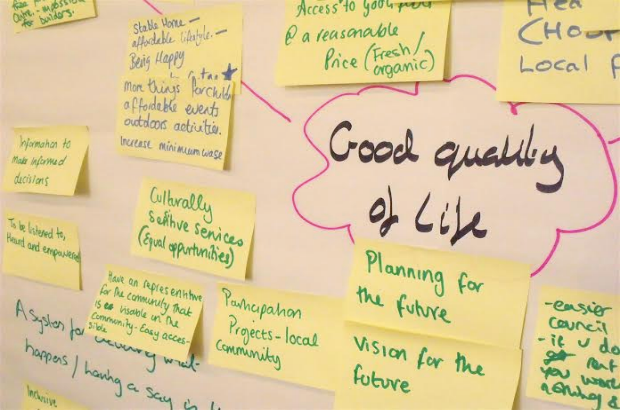

Last year the Cabinet Office teamed up with the Department for Communities and Local Government to explore how Community Organisers could support local communities in exercising the rights introduced in the Localism Act 2011. A Community Organiser Mobilisation fund was established to resource a programme of work delivered by CO Ltd and Locality. The Analysis and Insight Team supported the evaluation of the programme and in this blog we share the results.

The Localism Act

The Localism Act was introduced in 2011 to give communities more say and greater power in shaping their areas, including what happens to local amenities, how services are delivered and how new development is planned. The Act, and related legislation that has come into force since, have given communities new rights, including:

- Right to bid for community buildings and facilities that are important to the community;

- Right to challenge, by bidding to run a local authority service where communities believe they can do so differently and better;

- Right to build small-scale, site-specific, community-led developments;

- Right to reclaim land by asking that under-used or unused land owned by public bodies is brought back into beneficial use;

- Neighbourhood planning to allow communities to come together to prepare neighbourhood plans and shape new development.

Community Organiser Mobilisation Fund Programme outline

Since the Localism Act there has been ongoing support available to communities to help them navigate and exercise the rights, including information, advice and guidance. The aim of the Community Organiser Mobilisation Fund was to explore what role, if any, Community Organisers (COs) could play in supporting communities, building on the success of the Government’s preceding Community Organiser programme, which ended in 2015.

The programme ran for eight months, during which a selection of COs were trained in Community Rights and then worked in their local areas to explore how the rights could help communities tackle important local issues. Organisers attended a residential event and follow-on specialist training. They were supported by a Programme Manager and had monthly online supervision sessions.

Throughout the programme the evaluators, Imagine, surveyed participating COs, conducted interviews, developed case studies in a small selection of areas, ran a series of workshops to reflect on lessons learned, and assessed programme monitoring information.

Achievements and lessons learned

In total, 38 COs participated, working in 27 neighbourhoods in England. On average they listened to around 200 people each, organised 270 events and recruited over 1,200 volunteers to get involved in community priorities. At the end of the programme in June 2016 there were 16 COs working on Neighbourhood Plans; 7 exploring the right to build; 9 the right to bid; 3 the right to reclaim land; and 5 the right to challenge.

Residents in the 27 communities were generally unaware of their rights under the 2011 Act and the evaluation found that COs were particularly successful at raising awareness and knowledge. They were also successful at linking the use of rights to issues that residents were concerned about. In fact, COs found Community Rights a useful lens through which to view issues and with which to engage local people.

Whilst there were other successes, such as buildings being listed as Assets of Community Value (ACVs) and neighbourhood forums being established, successfully exercising the rights within the eight-month programme was always going to be a challenge. The Organisers did attract volunteers to assist with their projects, but it became clear that the length of time and sustained effort required to exercise the rights was an issue. Furthermore, the case studies highlighted the gap in resources and expertise that can exist between residents on the one hand and planners and developers on the other.

Next Steps – Mainstreaming Community Rights training in Organising

The evaluation has identified some of the difficulties in exercising rights, and the evaluators provide recommendations for tackling them. These offer excellent food for thought, but stronger evidence than was achievable in this short time frame is needed to support implementation of some of the recommendations.

What is clear, however, is that Community Organisers can narrow the gap considerably between theory and practice. Community Rights are an important tool in the CO’s toolkit for engaging local people and addressing issues. Furthermore, there now exists a cohort of skilled COs, together with training materials, to support mainstreaming the rights into the training and development of COs as government takes forward its commitment to expand COs during this parliament. It is important to ensure the learning from this evaluation is embedded in future work.

Leadership of Community Organisers has moved out of Cabinet Office to the Department for Culture, Media and Sport, and we wish the team well with the delivery of the future programme and its evaluation.

Here is the evaluation report [051216-comfundevaluationreport_final]. If you have questions or views, please do get in touch and we will pass them on to the relevant team.



- The double benefit – there was a positive impact on both those who took part and those who were recipients, with the majority of beneficiaries being positive about the impact of the youth social action. They generally had a more positive impression of what young people contribute and 80% reported feeling more proud of their local area.

- Intergenerational relationships – findings suggest that social action can help beneficiaries meet new people, with 90% speaking to a young participant. Those who did were also more likely to report the social action as very worthwhile.

- Engagement – the evaluation also demonstrated that even those who were not actively engaged in their communities experienced positive benefits of youth social action.

- Inclusion and engagement – case studies highlighted that membership of Uniformed Youth groups can be accessible and appealing to a broad group of young people, and it was not difficult to get hard-to-reach young people engaged.

- Schools – there were a number of advantages to working through schools, including good access to the children and buildings fit for purpose.

Volunteering

Volunteering Charitable giving

Charitable giving Neighbourhood

Neighbourhood  Civic Engagement

Civic Engagement Wellbeing

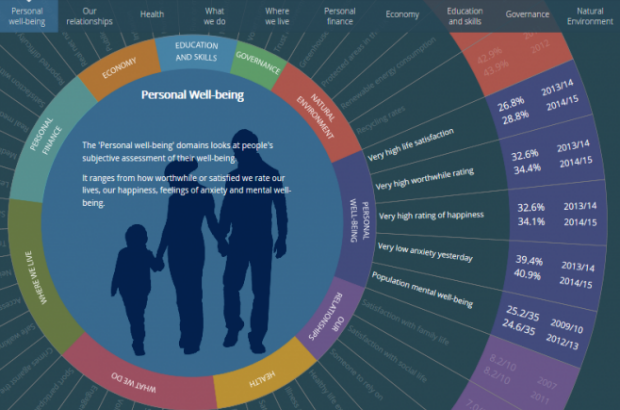

Wellbeing